Testing basic sequences will pop the AI bubble

Following a logical chain of reasoning is essential for large language models (LLMs) to complete basic human work. Their foundational architecture is incompatible with these tasks.

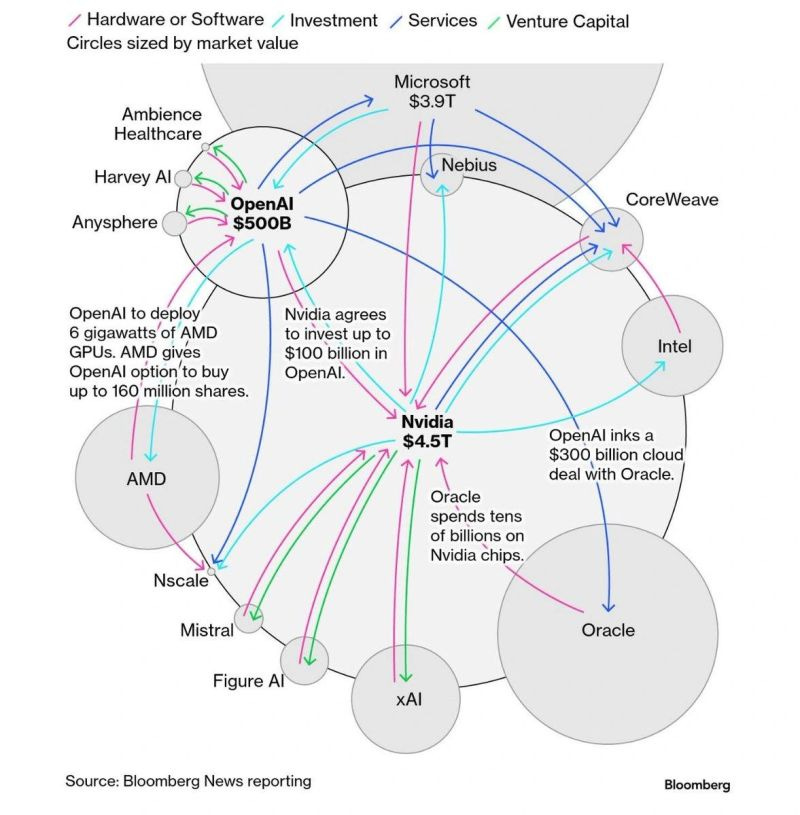

We are still a long way from large language models (LLMs) having human-level intelligence. This is a problem because these models need to be able to complete basic human tasks to be viable, useful products.

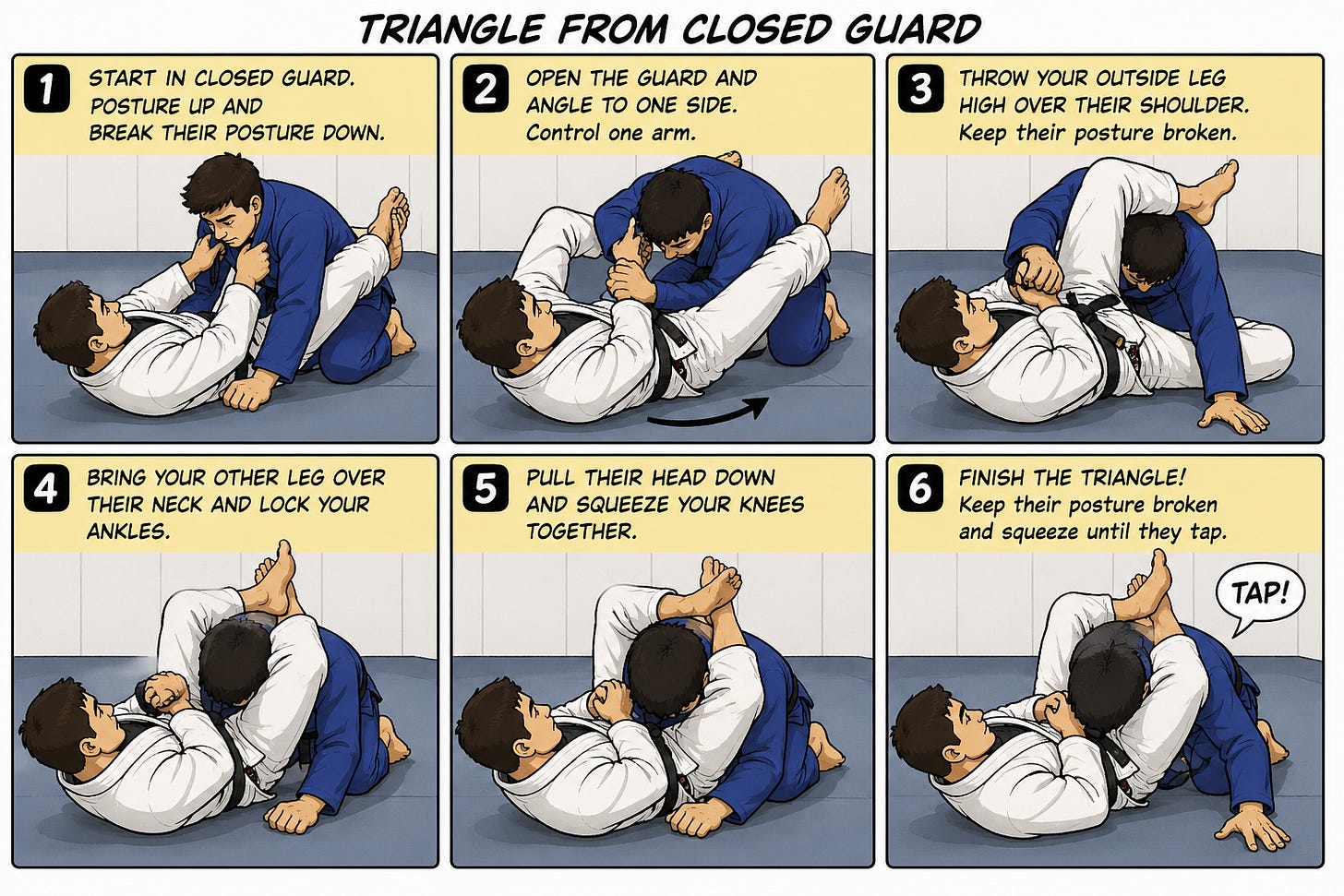

In less than 10 minutes, I can teach a 5-year-old kid or an overweight 60-year-old man how to do a triangle from closed guard. It’s one of the most basic Brazilian Jiu Jitsu moves, taught to first-day students during a free intro class.

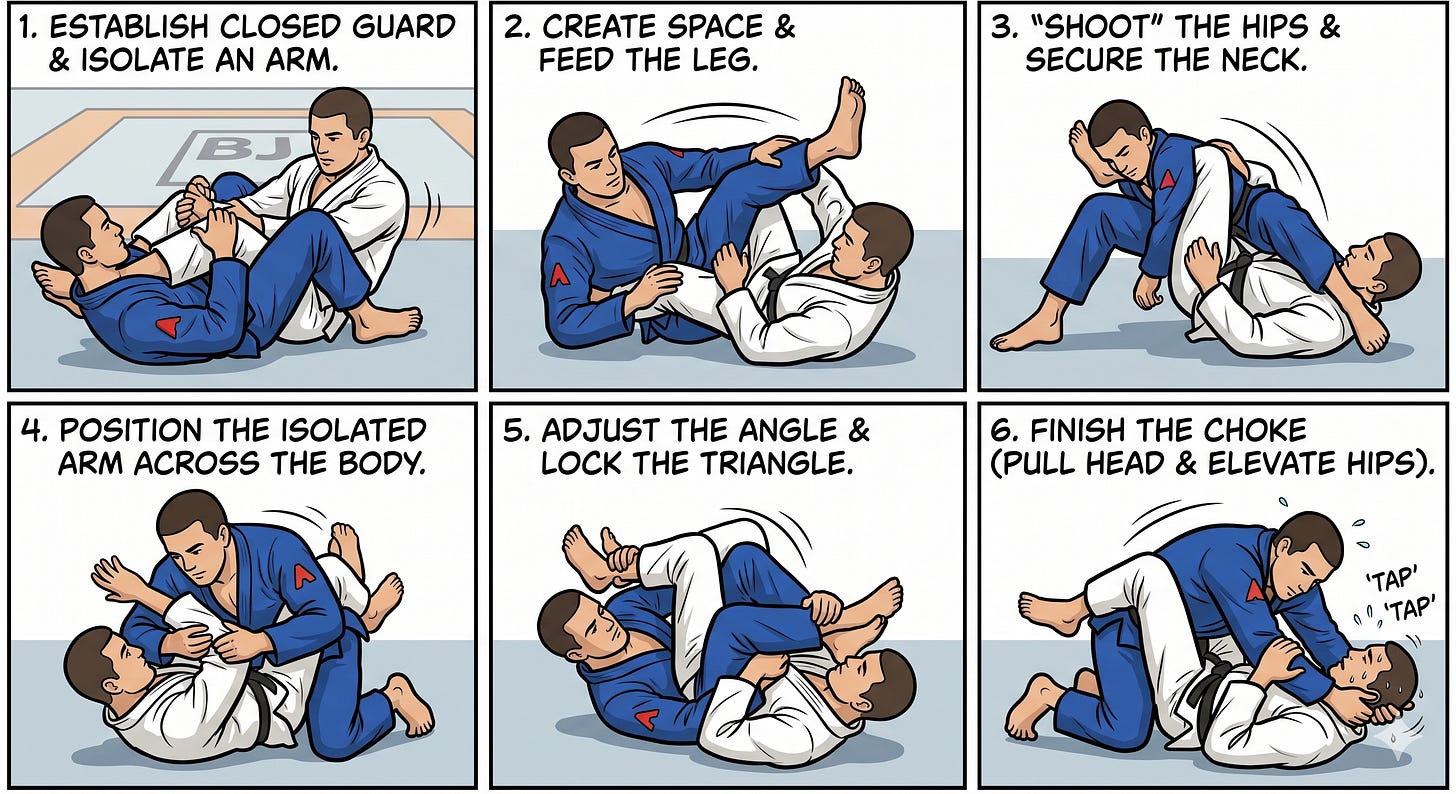

When the guy in blue controls one of his opponent’s arms, he can throw his legs over the trapped arm to complete a choke. It’s called a “triangle” because your legs make the shape of a triangle to squeeze both sides of your opponent’s neck. It’s a very simple and effective technique used by everyone from brand new students to experienced black belts.

The internet has thousands of jiu jitsu video tutorials about basic moves like the triangle. OpenAI and Google just spent ~$250 BILLION scraping every piece of data on the internet to build and train their newest models. But ChatGPT and Gemini still can’t put 6 basic human movements into a logical order. This has huge implications for the application of AI because sequential chains of reasoning are essential to do real work.

This Google Gemini explainer makes no logical sense and doesn’t form a cohesive chain of movements from panel to panel. If you knew absolutely nothing about jiu jitsu, it might only seem a little off. But when you read the instructions, the steps don’t make sense or match the images.

ChatGPT’s new image model looks much cleaner and would trick most people into thinking the instructions are valid. The problems start in panel 3, where the guy in white grabs the outside arm instead of the inside arm. Without controlling the arm that’s trapped between your legs, you can’t do a triangle. The danger with AI is outputs that seem pretty good unless you really understand the topic.

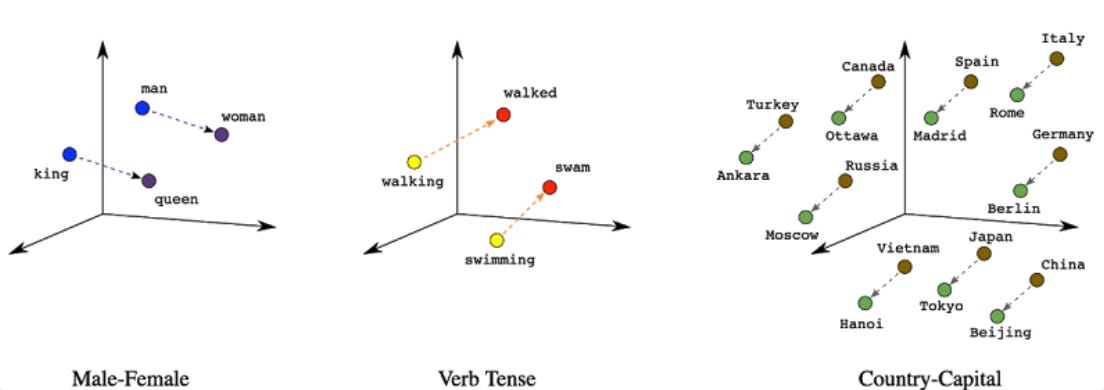

Both models got these simple sequences wrong because an LLM doesn’t “know” what a human body is or how leverage works. Scraping the entire internet has taught AI models that the word “triangle” appears near the words “guard”, “gi”, “blue”, and “arm”. These AI image generators are matching words to pixel patterns rather than understanding anything. Probabilistic pattern matching isn’t spatial or mechanical reasoning.

These terrible quality BJJ explainers are exactly the same as students sending me papers with fake citations, ChatGPT telling me I can fly a plane with a 200-mile range across the Atlantic Ocean, or an AI agent deleting an entire codebase. (See more in my article: AI is making mistakes on purpose)

The transformer architecture behind ChatGPT and Gemini is a deep learning model that uses a “self-attention” mechanism to weigh the significance of different parts of an input sequence simultaneously. This process is intended to let the model capture complex, long-range relationships in data. Because the architecture calculates the statistical probability of words following one another, without understanding the physical mechanics of the human body or the world, it can fluently describe the “steps” of a triangle choke while being completely blind to the fact that the output is nonsensical.
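To make “self-attention” less abstract, here is a minimal sketch in plain Python. It is a toy, not how ChatGPT or Gemini is actually implemented: it uses a single attention head, skips the learned query/key/value projections that real transformers have, and the four two-number “embeddings” are values I made up for illustration. What it shows is the core idea: every token scores its similarity to every other token, and those scores become weights. Nothing in it represents a body, a limb, or leverage.

```python
import math


def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def self_attention(embeddings):
    """Toy single-head scaled dot-product self-attention.

    Assumption: queries, keys, and values are the raw embeddings
    themselves. Real models apply learned projections first.
    """
    dim = len(embeddings[0])
    weights, outputs = [], []
    for query in embeddings:
        # Similarity of this token to every token in the sequence.
        scores = [
            sum(q * k for q, k in zip(query, key)) / math.sqrt(dim)
            for key in embeddings
        ]
        row = softmax(scores)
        weights.append(row)
        # New representation: a weighted average of all the embeddings.
        outputs.append(
            [sum(w * value[i] for w, value in zip(row, embeddings))
             for i in range(dim)]
        )
    return weights, outputs


# Hypothetical tokens and made-up 2-D embeddings, for illustration only.
tokens = ["trap", "arm", "lock", "legs"]
toy_embeddings = [[1.0, 0.0], [0.9, 0.1], [0.0, 1.0], [0.1, 0.9]]

weights, outputs = self_attention(toy_embeddings)
for token, row in zip(tokens, weights):
    print(token, [round(w, 2) for w in row])
```

In this toy, “trap” puts more weight on “arm” than on “lock” only because I gave those two tokens similar numbers. The mechanism measures how alike the numbers are, not whether the arm is actually trapped.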

LLMs aren’t just bad at Brazilian Jiu Jitsu. In this hilarious exchange, ChatGPT repeatedly tells Father Phi to walk to the car wash because a transformer model is making probabilistic guesses without actually understanding the physical world.

Unless you are an expert in the topic you are using AI for, LLM outputs can make you worse at whatever task you are doing. If you are an expert, AI can make you faster if you are able to check every part of the output (which can take more time than just doing it yourself). If there is an autonomous AI agent, the first break in the logic chain ruins the entire string of actions/outputs.
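A back-of-the-envelope way to see why one broken link matters so much for an agent: if every step in a chain has to be right, the per-step success rates multiply. The sketch below assumes each step succeeds independently with the same probability, which is a simplification, and the 95% and 90% figures are illustrative numbers I picked, not measured accuracy for any model.

```python
def chain_success(step_accuracy, num_steps):
    """Chance that every step in a chain is correct.

    Assumption: steps succeed or fail independently of each other
    and all share the same per-step accuracy.
    """
    if not 0.0 <= step_accuracy <= 1.0:
        raise ValueError("step_accuracy must be between 0 and 1")
    if num_steps < 0:
        raise ValueError("num_steps must not be negative")
    return step_accuracy ** num_steps


# Illustrative numbers only: a 6-step sequence, like the 6 movements above.
for accuracy in (0.95, 0.90):
    chance = chain_success(accuracy, 6)
    print(f"{accuracy:.0%} per step over 6 steps: {chance:.1%} fully correct")
```

Under these assumptions, a model that gets each step right 95% of the time completes a 6-step sequence correctly only about 74% of the time, and at 90% per step it drops to roughly 53%. Longer agent chains fall off faster.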

My prediction for the AI future is that smart people will get slightly more efficient while not-so-smart people churn out useless slop they are oblivious to. These flaws are foundational to the transformer architecture, not bugs that can be fixed over time.

Think about a topic with a sequence that you know really well and ask ChatGPT some questions about it. Realizing that LLM outputs are mostly useless and these models can’t really do human work will pop the biggest investment bubble in history.

David Riedman, PhD is the creator of the K-12 School Shooting Database, Chief Data Officer at a global risk management firm, and a tenure-track professor. Listen to my podcast—Riedman Report: Risk, AI, Education & Security—or my interviews on Freakonomics Radio and the New England Journal of Medicine.