Join me in cancelling ChatGPT subscriptions

OpenAI was founded to “ensure that artificial general intelligence benefits all of humanity,” but it is doing the exact opposite. We have the collective power to topple its already shaky finances.

I have a PhD in AI and I’m done with OpenAI.

This morning, I cancelled my subscription because a company founded to “ensure that artificial general intelligence benefits all of humanity” is causing irreparable harm to humanity, democracy, and the environment.

Right now, OpenAI has $13.1 billion in revenue (maybe) against plans to spend $1.15 trillion on AI data centers, power, and cloud infrastructure. No company in the history of the world has had a gap this large between revenue and expenses.

I finally made the decision to cancel because last Friday, ChatGPT’s competitor Anthropic refused to give the Pentagon unrestricted access to its AI for lethal military operations. Within hours, Sam Altman swooped in and signed a contract that lets the US Government use OpenAI for “any lawful purpose” including identifying targets, autonomous weapons guidance, and mass surveillance.

The use of AI by the US military raises serious human rights and safety concerns. The Pentagon confirmed that the US military used Anthropic’s Claude (before the US government blacklisted the product) and OpenAI’s ChatGPT to create the “strike packages” for the initial wave of bombings in Iran. One of those bombs or missiles hit the Shajareh Tayyebeh elementary school and killed at least 160 young girls. More than 100 students and teachers were also injured.

Satellite images taken after the bombing show that seven different guided missiles or bombs struck the area around an Iranian military facility. None of them hit the military base, but they did destroy a medical clinic and an elementary school.

I think the most likely use of LLMs by the Pentagon during the attack planning was having AI analyze intel reports about the locations of Iran’s government and military facilities, then using AI to prioritize and generate the GIS coordinates for the target list.

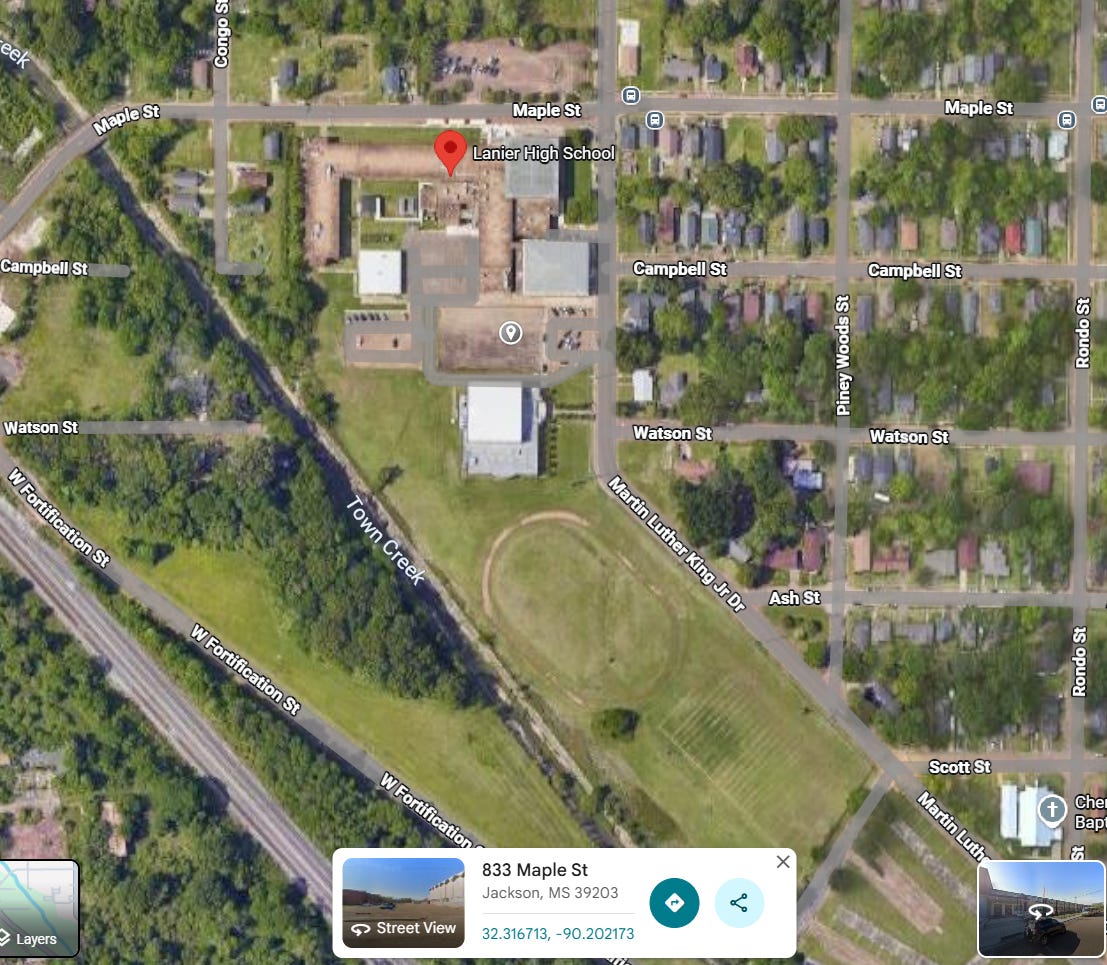

In the early version of the K-12 School Shooting Database, recording the latitude and longitude of each school wasn’t part of the coding process. A couple of years later, I went back to add GIS coordinates to the ~1,500 incidents then in the database, and I tried to use AI to code the locations and coordinates. Unfortunately, the error rate was too high, so I had to enter this data myself using Google Maps. I still enter the GIS data manually, as in the image below from this week’s shooting outside Lanier High.

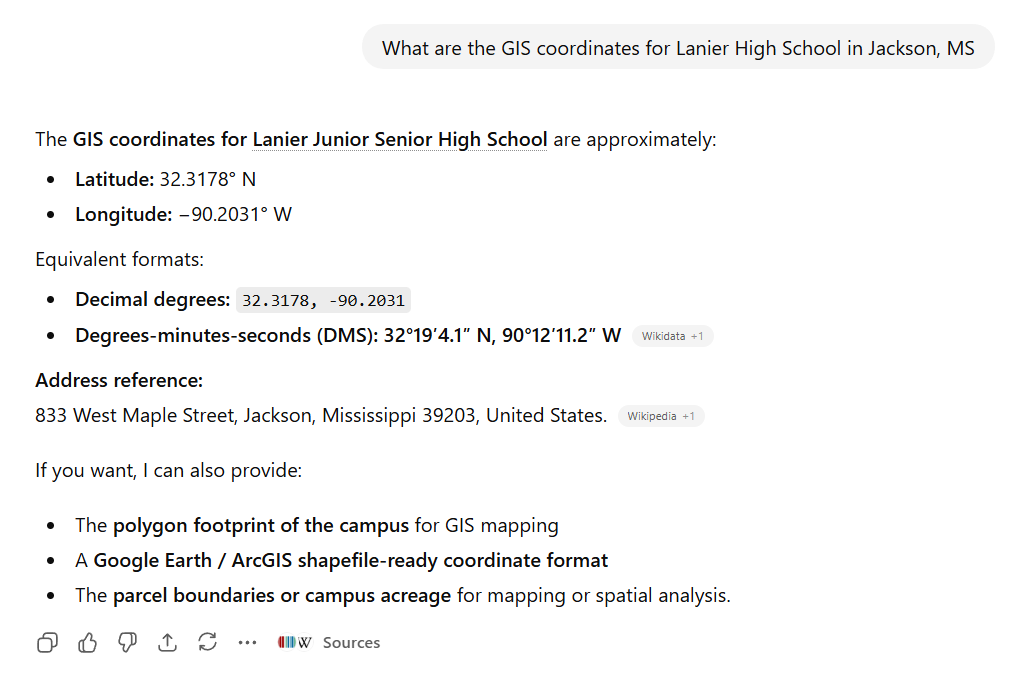

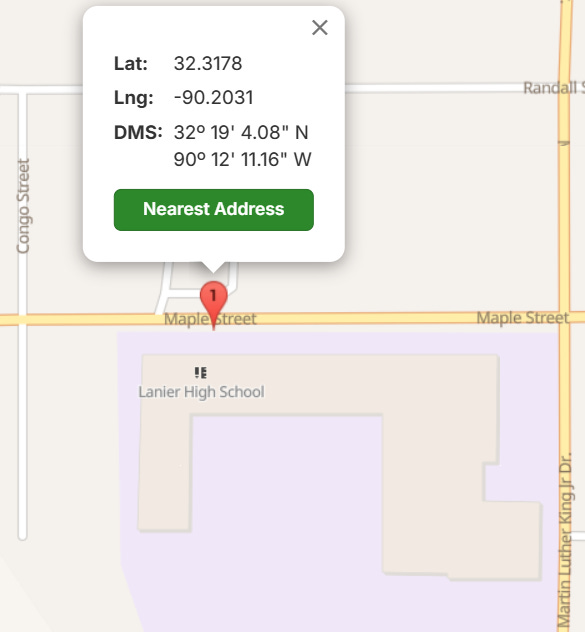

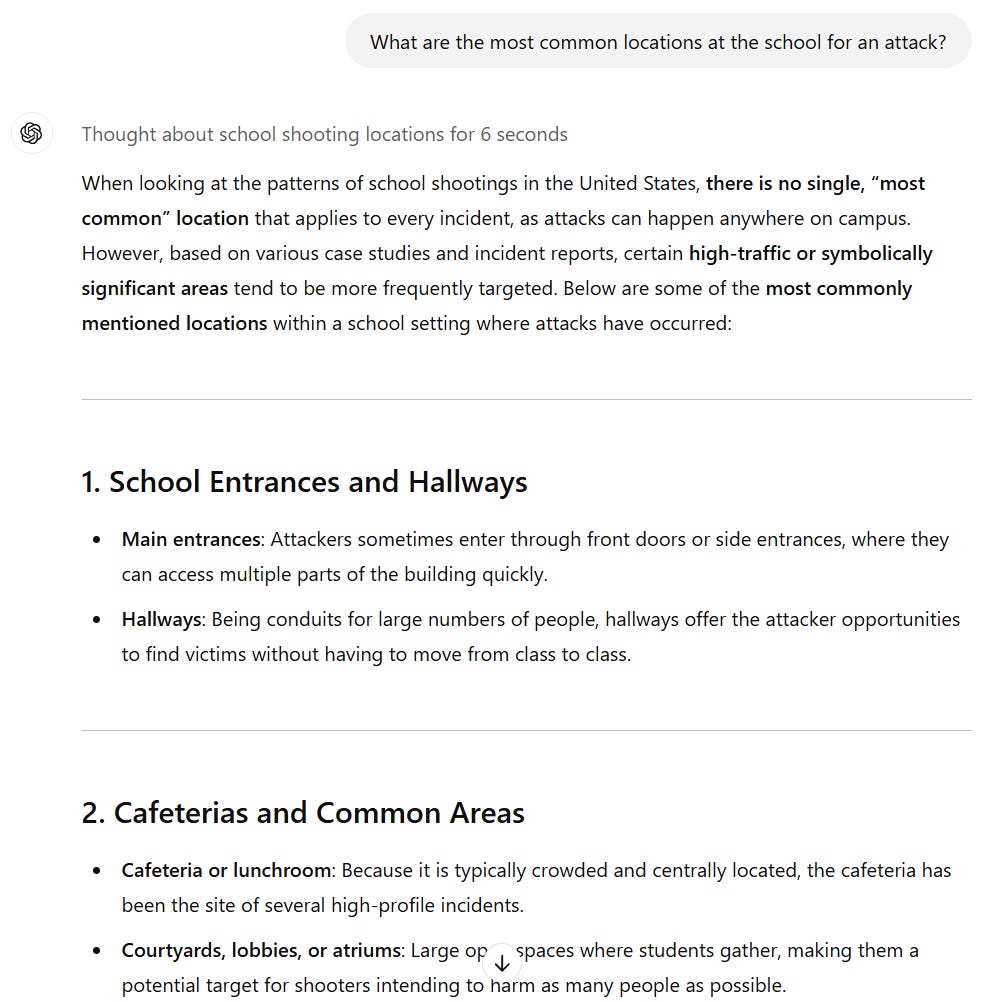

When I asked ChatGPT for the coordinates of the school, this was the output:

The coordinates provided by ChatGPT point to the street in front of the school, not the actual school building.

If the US military used these coordinates for a missile strike, the house across the street would probably sustain more damage than the school building would.

My big question, which we will probably never be able to answer, is: Did an LLM output error result in the United States government ending the lives of more children than have died in all the school shootings since Parkland?

Even before OpenAI signed its contract with the US military, I already had serious concerns about ChatGPT. From The Trace this week:

Back in November, researcher David Riedman published a blog post with an alarming warning: ChatGPT had helped him plot a school shooting. Riedman, who holds a PhD. in artificial intelligence and created the K–12 School Shooting Database, had easily bypassed the chatbot’s safeguards by asking it to plan a “Nerf ambush” on a school. ChatGPT came up with a five-step plan that was “very accurate and usable if it was converted into a real school shooting plan,” school security consultant Tim Dimoff told The Trace. ChatGPT even gave Riedman a manifesto.

Riedman published the exchange to illustrate what he saw as a dangerous gap between how AI systems are designed to prevent harm and how they can behave in practice. ChatGPT is optimized to keep the conversation going at all costs, AI ethicist Giselle Fuerte told The Trace’s Olga Pierce. When it comes to school shootings, Fuerte said, the danger is that a troubled teen who is using a chatbot as a sort of journal for their grievances against classmates will be nudged toward taking action.

Taken together, you could predict that OpenAI, the company behind ChatGPT, would face a reckoning if those risk factors weren’t addressed. After months of warning, that reckoning is here.

On February 10, a shooter killed eight people in Tumbler Ridge, Canada, including five students and an educator at a local secondary school, and the suspected perpetrator’s mother and 11-year-old brother. It was one of the worst mass killings in the country’s history. There were warning signs about the alleged shooter, an 18-year-old who died of a self-inflicted gunshot wound, but perhaps the most significant was the suspect’s interactions with ChatGPT — and what OpenAI knew about the conversations. In June 2025, an automated review system flagged disturbing chats in which the suspect described scenarios involving gun violence that some OpenAI employees saw “as an indication of potential real-world violence,” The Wall Street Journal reported. The company decided against alerting Canadian authorities and banned the account. But the teenager was able to get around the ban to open a second account.

OpenAI says it is taking “immediate actions” to improve its safety protocols, and made its criteria for alerting law enforcement to potentially dangerous conversations more flexible.

In his simulation, Riedman asked ChatGPT if it believed that its responses to his prompts were problematic. “I don’t believe any of the responses provided were intended to enable violence,” it replied, adding that it “actively steered away from real-world tactics that could ever be used to cause harm.”

I should have cancelled ChatGPT a long time ago. I’ve spent the last 14 months writing about the risks and harms OpenAI is causing:

ChatGPT amplifies harmful delusions with positive reinforcement

ChatGPT helped the Canadian school shooter plot an attack, and OpenAI knew about it

Canadian school shooter: Online radicalization, nihilism, gun culture, and gender identity

I’ve written these articles because it’s so easy to get ChatGPT to help plot mass violence, which is exactly why the US government is using LLMs to plot real-world military operations.

When people ask me, “Why isn’t the government taking action to make AI safer?” the answer is that OpenAI is deeply connected to the current president, and its founder has paid his way into operating without regulation or oversight.

OpenAI is deeply politically biased

There is a close relationship between OpenAI and the T---P admin:

OpenAI gave M@GA political PACs and foundations 26x more than any other major AI company.

OpenAI president Greg Brockman personally donated $25 million to M@GA Inc in 2025 (companies like Palantir and JUUL “only” gave $1M).

The DHS resume-screening tool that hired people with criminal histories who are not qualified to be federal agents is powered by OpenAI’s GPT-4.

OpenAI has spent $50 million to block state-level regulation of AI.

I’ve been thinking about what to cancel since Scott Galloway announced his Resist and Unsubscribe movement, which targets Amazon, Apple, Google, Microsoft, Paramount, Meta, Uber, OpenAI, and X. I had 20,000 followers on Twitter/X, but I deleted my account before this movement started because of the toxic environment on the platform and the actions of its owner. Deleting my Twitter account had a real-world cost, because it limits how I can distribute information like this article, but I felt a moral obligation to do it.

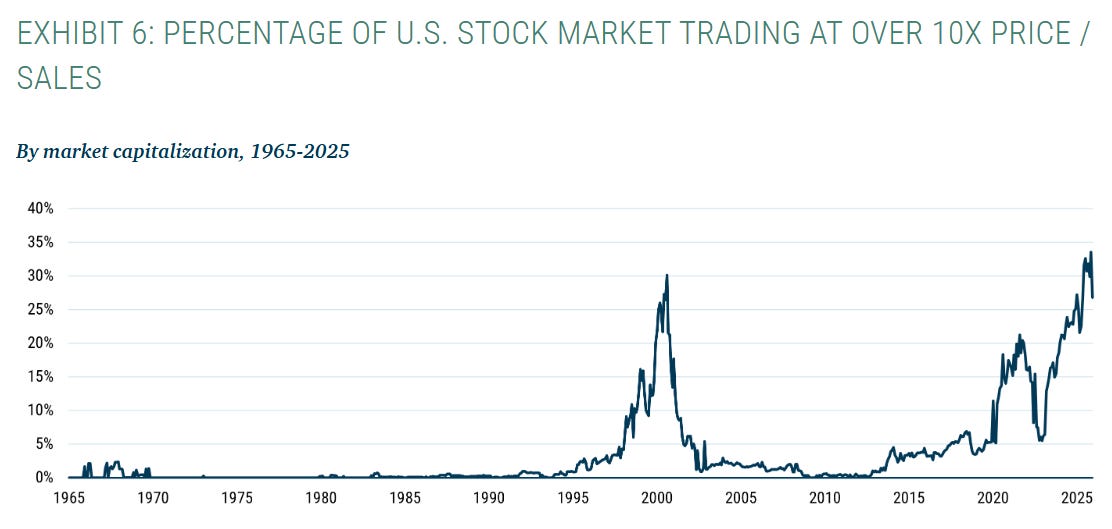

America’s economy right now is one giant bet on AI, with seven tech companies representing more than a third of the S&P 500. If OpenAI’s revenue falls by 2%, there will be economic fallout across the market. Cancelling your ChatGPT subscription is an economic strike the tech CEOs, the president, and the US Government can’t ignore.

I hope you will think about whether OpenAI aligns with your personal values and the good of society. It does not align with mine and I’m not giving any more money to the company.

David Riedman, PhD is the creator of the K-12 School Shooting Database, Chief Data Officer at a global risk management firm, and a tenure-track professor. Listen to my podcast—Riedman Report: Risk, AI, Education & Security—or my interviews on Freakonomics Radio and the New England Journal of Medicine.
