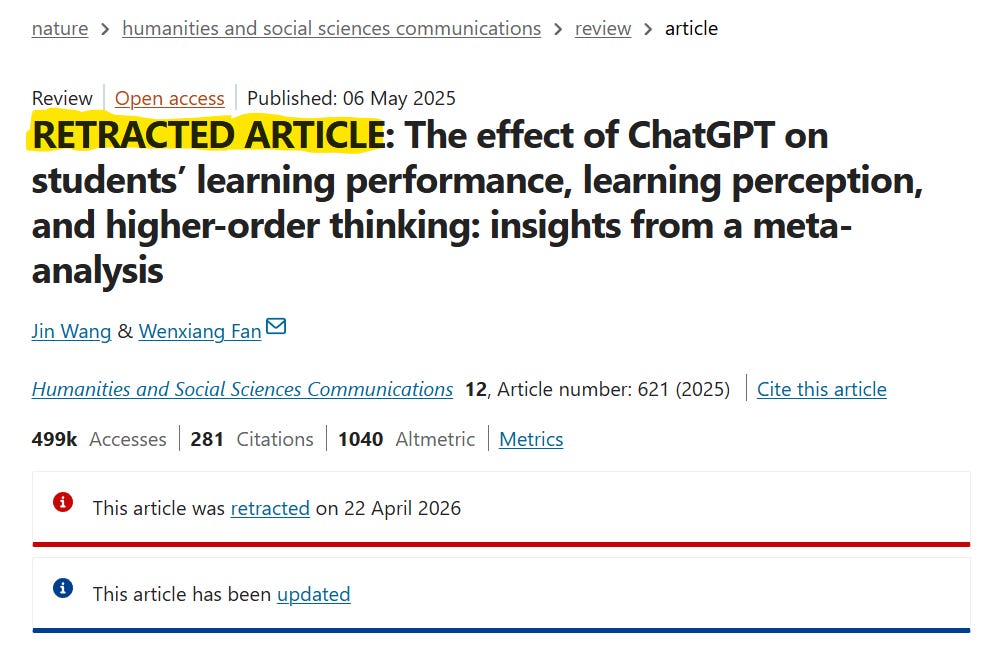

Retracted study claimed ChatGPT helps students learn

A paper in 'Nature' claimed that OpenAI’s ChatGPT can have a “large positive impact on improving learning performance,” but the claim rested on synthesizing very poor-quality studies and mixing together findings that cannot be compared.

When Nature, one of the most prestigious academic journals in the world, publishes an article, the findings usually get mainstream news headlines. When that article is about the biggest tech trend and focuses on a product from a company with a multi-trillion-dollar projected valuation, the article can have huge impacts across society.

For example, when a Nature paper claimed that ChatGPT enhances students’ learning performance and has the strongest effect when students use ChatGPT at school for 4-8 weeks straight, a teacher might decide to encourage students to use AI for every assignment all semester. The topic was hot enough to push the paper into the top 1% of Nature articles for reads (over 500k) and citations (~280 in the first year). For comparison, most academic papers get a couple dozen reads and are never cited by anyone else.

But when Nature retracts that article a year later, it’s barely a whisper and the damage is already done. I follow AI and education very closely and I didn’t even see this retraction until a week after it happened.

This May 2025 article claimed using OpenAI’s ChatGPT can have a “large positive impact on improving learning performance” and a “moderately positive impact on enhancing learning perception and fostering higher-order thinking.” The authors recommended that “ChatGPT should be actively integrated into different learning modes to enhance student learning, especially in problem-based learning.”

The retracted study made very specific educational claims including:

- The broad use of ChatGPT at various grade levels and in different types of courses should be encouraged to support diverse learning needs
- ChatGPT should be actively integrated into different learning modes to enhance student learning, especially in problem-based learning
- Continuous use of ChatGPT should be ensured to support student learning, with a recommended duration of 4–8 weeks for more stable effects
- ChatGPT should be flexibly integrated into teaching as an intelligent tutor, learning partner, and educational tool

The paper was retracted because “it was not an experimental study, but a meta analysis that synthesized the findings of 51 existing studies. It appears it was synthesizing very poor quality studies, or mixing together findings from studies that simply cannot be accurately compared due to very different methods, populations, and samples. It is not feasible that dozens of high-quality studies about ChatGPT and learning performance could have been conducted, reviewed, and published in that time. It really seemed like a paper that should not have been published in the first place.”

The retraction comes as the AI industry continues to make a heavy push to be integrated into K-12 and higher ed classrooms. OpenAI has partnered with schools to provide students with free access to its AI tools. For example, OpenAI’s YouTube channel has dozens of short, algorithm-optimized partner videos with prominent universities that highlight how professors are using ChatGPT. Combine this with a major academic publication in Nature showing the benefits of using LLMs in the classroom, and teachers would think they need to use ChatGPT or else their students will be left behind.

OpenAI, Anthropic, Google, and Microsoft are pouring millions of dollars into advertising and lobbying, and are even donating $10,000,000 to teachers unions and $50,000,000 to higher ed research, to bolster the adoption of their products in the classroom.

When schools are struggling for funding, teaching is difficult, students at every level are using LLMs to write their assignments, and kids are constantly online, OpenAI is capitalizing on a captive and desperate market.

I personally believe that there are limited benefits for students using LLMs at school because the models generate text probabilistically and can produce totally wrong answers to basic questions. The models also tell you that your ideas are great even when your ideas are awful, whereas a human teacher would hopefully tell you the truth. The purpose of going to school is to learn how to think, and outsourcing that process to an AI model is probably going to be very detrimental to students’ development.

If you missed my articles about the risks from OpenAI and unregulated LLMs like ChatGPT, Claude, Gemini, and others over the last 15 months:

- Seven lawsuits filed after ChatGPT helped plot a school shooting in British Columbia
- Is ChatGPT criminally responsible for the FSU school shooting?
- ChatGPT helped the Canadian school shooter plot an attack, and OpenAI knew about it
- ChatGPT amplifies harmful delusions with positive reinforcement

David Riedman, PhD is the creator of the K-12 School Shooting Database, Chief Data Officer at a global risk management firm, and a tenure-track professor. Listen to my podcast—Riedman Report: Risk, AI, Education & Security—or my interviews on Freakonomics Radio and the New England Journal of Medicine.